3 powerful ways to transform your training with data, research and insights

At Everest Virtual Conference 2021, the training industry got to experience an insightful talk from Chris Martin, CMO of FlexMR.


Chris Martin, CMO at FlexMR


This blog summarises the key takeaways from Chris's talk at Everest Conference 2021. Direct quotes have been altered to aid the flow of content.

The evolving insights landscape

4 ways customers, behaviours and markets are evolving:

- Markets are growing - 30,000 new products and services were launched in 2020, in the UK alone

- Behaviours are evolving - 75% of consumers tried a new shopping habit in 2020

- Competition is increasing - the #1 challenge for business leaders is creating business impact

- Pressures are mounting - 44% of marketing leaders expect budgets to shrink

Over the past couple of years, we've all felt this 'increasing pressure'.

The data dichotomy


Do you suffer from analysis paralysis?

You have so much data that you don't know what to do with it, and you simply don't know where to start.

There's so much you can track, and so many acronyms and metrics floating around, such as NPS (Net Promoter Score), CXR (Customer Experience Research), customer feedback initiatives and Customer Effort Scores. Understanding where to start, what's going to be important, and what data is needed to make effective decisions can be quite difficult.

5 golden questions to turn your course data into action

Chris outlined 5 key questions that can help your business turn unstructured qualitative and quantitative data (words and numbers) into meaningful insights that help us make decisions.

Q1. What is most important for my business to know?

The answer to this question could be any number of things.

A common one in the training industry may relate to customer experience: 'What is our customer experience, and how good is it?'

It could be around revenue growth, or even training delivery and how we interface with customers.

Q2. How can I find out or measure my business against what I need to know?

This question can help us work out what kind of research and data we're going to need.

As an example, it's not a huge surprise to learn that recommendations are a key driver of business success and new growth for training businesses. Likelihood to recommend is measured by the Net Promoter Score (NPS). Therefore, if we want to increase recommendations, NPS is the measurement tool we want to use.

Q3. What data do I already have access to that can contribute to the answer?

This question is often skipped.

There's a huge amount of data already locked up in businesses, such as in CRMs and spreadsheets, whether that's customer data, customer interactions, or individual trainer data such as feedback on course delivery or materials. Figuring out what we already have access to is an important step.

Q4. What data do I need to collect to create a complete answer?

More on this shortly!

Q5. What actions am I willing to take based on my findings?

The answer to this might require radical action, and it's important to ask at the outset of any research project: "Am I willing to take that action?"

If we're not willing to take the action, it's not worth investing in the research in the first place.

The four horsemen of data research

Quantitative

Quantitative data relates to numbers and statistics: data that we can put a tangible measure on. Quantitative data helps us answer the 'what' of a question, i.e. what effect is happening.

Qualitative

Anything related to words. Often hard to measure, this relates to thoughts, feelings and emotions. This helps us to answer the 'why' of a question - what attitudes and behaviours are driving the quantitative data above.

Exploratory

When we don't necessarily know what we're looking for, we want to go to the market and find out something new. This could be a new course offering, or a new product line, or exploring what customers or employees want from us.

If we don't know the answer to that question, we're going out and exploring what the answer might be.

Explanatory

Explanatory is all about explaining the effects of something that has happened. So, if we observe something, such as a dip in NPS - we want to know why that's happened, we want an explanation of the effect.

Three types of research

Chris outlined 3 examples of how training providers can undertake useful research and extract meaningful insights.

Example #1: Focus Groups & Interviews

For exploratory stages, such as new course, product or service development.

Focus groups

Focus groups are essentially getting a group of people together. Pre-COVID, this would typically be done in person; post-COVID, we're of course seeing much higher use of online tools such as Zoom.

Focus groups are most effective if there is a maximum of 6 or so participants, as it's important to give everybody enough time to speak, express their opinions, and get to know each other.

The aim of a focus group is to ask participants directed questions on a particular topic, so it's important to go into the session with an outline, or topic guide, of the questions we want to ask and the answers we want to find out.

The questions will of course be determined by what you are looking to find out, but a few example questions that are useful for training providers are listed below:

- What is it about our current training offering that you like?

- What's missing? Why is that missing?

- What would it mean to you, to have access to that particular type of training?

- Can you think of any arguments against why we might offer that?

We should come out of this with a robust and in-depth answer about what we want to develop, but to get there, there are a few things we're going to need.

Controlled stimuli

Know when to introduce stimuli to the group and how to control conversation when it's live.

Controlled stimuli are the resources we're going to use throughout the focus group or interview. They could be images, such as training materials if you're already at the development stage; perhaps you want to share these and get specific feedback on the direction of future content. They could also be a promotional video, an audio clip or a written document. Whatever you want to share, make sure it's included and given consideration within the topic guide.

Asking group questions

Engaging the whole group in key questions that are collaborative and require discussion.

It's useful to consider both group questions as well as individual questions. Group questions are used to stimulate debate and discussion and get everyone's opinion, drawing out the differences between people.

Asking individual questions

Narrowing in on individual responses to probe further into a subject or topic.

Individual questions are used to home in on certain individuals' opinions, particularly ones that are worth 'pulling on a thread for' to understand a unique point of view in more detail. Balancing individual and group questions is a real skill, but it is key to getting the most out of your course research.

Countering group think

Understanding the difference between similar views and the effects of group think.

Group think is an important consideration during a focus group, and something we want to avoid. It is an effect where the more dominant members of a focus group start to outweigh the less forthcoming members, and the less dominant members slowly shift their opinions to align with the group. Of course, it is possible for people to hold similar opinions without group think, but it's important to recognise that group think is a possibility, be mindful of when it occurs, and try to counter it.

Show of hands

Adding a quant element and using the results to stimulate further discussion.

A show of hands, or a quick poll, can be particularly effective in focus groups. Asking a live question, collecting quantitative responses, and then using the results can fuel further discussion.

The benefits of in-depth interviews

Group bias and group think have been long-running issues with focus groups, and they can be avoided with interviews. An interview is a great opportunity to understand one opinion in depth. To achieve the same scale as a focus group, however, we'll need to run multiple interviews, speaking to 5 or 6 people individually, and that will extend the length of our research process.

Although some regard interviews as the 'purest' form of research, running multiple interviews is often associated with the highest costs and lengthy timescales. This can be overcome using online tools, which make the process far more efficient and remove travel costs and delays. Virtual rooms also allow stakeholders or observers to attend and watch customer feedback unfold in front of them.

It's important not to underestimate the power focus groups and interviews can have as a political tool in driving change.

Example #2: Diaries & Ethnographies

For individual and personal qualitative data, such as understanding journey experiences.

Whereas focus groups and interviews usually capture a single point in time, after which a research project reaches a natural conclusion, diaries and ethnographies tend to be longitudinal: they run for a much longer period. We might consider such a study for gathering course feedback, especially if we're running a course over 1, 2 or more months. We want to see the key points in that lengthy journey: what the highlights and lowlights are, what experiences and emotions people go through at various points, and where the opportunities to improve lie.


Best practice for diary studies

- Consider where, when and why participants will be making diary entries

- Ask both quantitative and qualitative questions to understand 'what' and 'why'

- Include space for directed and free-form feedback

- Emphasise aspects of anonymity and privacy to ensure participants are comfortable sharing comments

- Look for themes that emerge over time within individuals' entries and across groups

Example #3: Surveys

For quantitative, measurable data such as post-course Net Promoter Scores.

Understanding the different types of survey

There's no standard, agreed definition of survey types, which has caused the variety of types to balloon. Each type is used for a different purpose; what's important is that the survey you choose is matched to a need that you have.

One particularly useful survey type is the Net Promoter Score (NPS).

The Net Promoter Score (NPS)

If generating referrals is important to us as a business, NPS can tell us whether we're likely to generate those referrals, whether we can expect the number of referrals to grow next year, or whether we need to take action to generate more.

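The NPS setup is really simple and incredibly powerful. It's made up of two simple questions: one is the 'what' (typically, how likely respondents are to recommend you to a friend or colleague, on a scale of 0 to 10) and one is the 'why' (an open question asking the reason for that score).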

For training providers, the subject could be a course, a product or a service. It's a simple question that generates a lot of responses and gets us the data we need. A number of alternatives to this measure have been proposed over the years, but few have matched the simplicity and elegance of the NPS.

Essentially, respondents who score 9 or 10 can be considered promoters, and are the most likely candidates to offer referrals and case studies. Those who score 7 or 8 can be considered passives, and those who score 6 or under can be considered detractors.
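The score itself is conventionally calculated as the percentage of promoters minus the percentage of detractors, giving a value between -100 and +100; passives count towards the total number of responses but not towards either group. A minimal Python sketch of that arithmetic, assuming responses arrive as a plain list of 0 to 10 ratings (the function name `net_promoter_score` and the sample responses are hypothetical, for illustration only):

```python
def net_promoter_score(scores):
    """Return the NPS (-100 to +100) for a list of 0-10 'likelihood to recommend' ratings.

    Promoters score 9-10, passives 7-8 and detractors 0-6.
    NPS = % promoters - % detractors; passives only count towards the total.
    """
    if not scores:
        raise ValueError("need at least one response")
    for s in scores:
        if not 0 <= s <= 10:
            raise ValueError(f"score out of range: {s}")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


# Hypothetical post-course responses from ten delegates (invented data).
responses = [10, 9, 9, 8, 7, 10, 6, 3, 9, 8]
print(net_promoter_score(responses))  # 5 promoters, 2 detractors -> 30.0
```

Note that passives still pull the score towards zero, because they add to the total without adding to either group.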

Making the most of NPS

- Choose carefully when to ask the question, and what subject to ask about

- Think carefully about what other data the scores can be sliced with (demographics, product, etc)

- Be consistent in delivery and do not lead survey takers - the data is more valuable than the score.
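Slicing scores by other data can be sketched as grouping responses by a field and calculating NPS for each group. The sketch below assumes each response is a dictionary with hypothetical `course`, `delivery` and `score` fields; in practice these would come from your own survey tool or CRM export, and the sample data is invented for illustration:

```python
from collections import defaultdict


def nps(scores):
    """NPS for one group: % promoters (9-10) minus % detractors (0-6)."""
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


def nps_by_segment(responses, key):
    """Group responses by the value of `key` and compute NPS per group."""
    groups = defaultdict(list)
    for r in responses:
        groups[r[key]].append(r["score"])
    return {segment: nps(scores) for segment, scores in groups.items()}


# Hypothetical responses; field names and values are illustrative assumptions.
responses = [
    {"course": "Excel Basics", "delivery": "virtual", "score": 10},
    {"course": "Excel Basics", "delivery": "classroom", "score": 9},
    {"course": "Project Management", "delivery": "virtual", "score": 9},
    {"course": "Project Management", "delivery": "virtual", "score": 8},
    {"course": "Project Management", "delivery": "classroom", "score": 4},
]
print(nps_by_segment(responses, "course"))    # {'Excel Basics': 100.0, 'Project Management': 0.0}
print(nps_by_segment(responses, "delivery"))
```

Small segments can swing sharply (a single detractor moves a two-person group a long way), so it's worth checking how many responses sit behind each figure before acting on it.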

NPS is not a review score. It's for you to track, over time, what your referrals are going to be like and what's really driving them. As soon as you turn it into a review system, you lose most of the value you can gain from it.

Bringing it together: Turning data into action

Strategies for using your research, data and insight to make real and tangible change happen.

Research doesn't end at the point of analysis or reporting. To turn it into action, it's important that it reaches the right people at the right time.

All too often, people consider only 3 stages of the research process, typically collection, analysis and reporting. It's important that we acknowledge every stage in the journey. For small teams, a single person may well take on all three of the roles below (delegate, researcher and decision maker), but it's important to recognise that the 3 roles are distinct, regardless of how many people are involved.

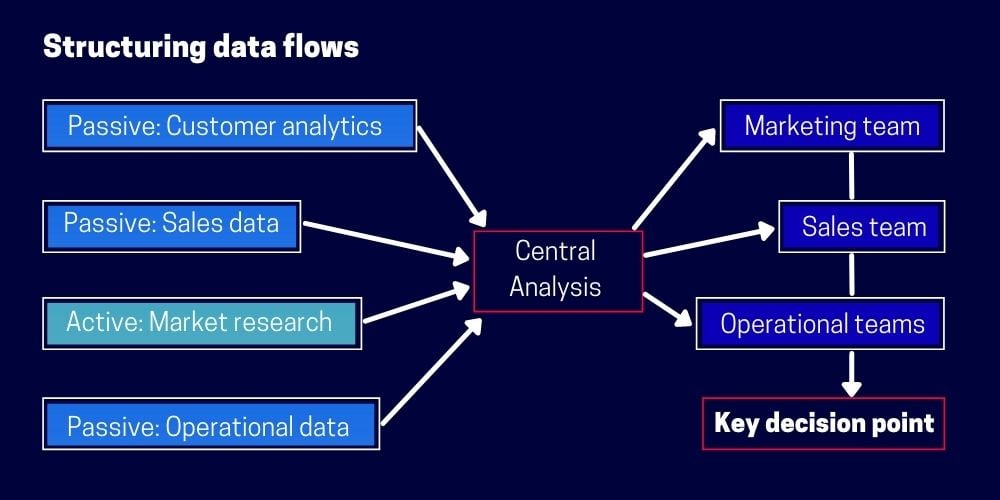

Structuring data flows

Too often, data sits in silos or with various different teams. Encourage teams to share information and build a central repository to take full advantage of what your business knows.

All of the different data sources that you have access to fall into one of two categories:

- Passive data - customer analytics and what's stored in your CRM, sales data, experiences of your trainers

- Active data - specific data you've gone out to collect to plug the gaps in your knowledge, such as market research

All of this data can then be brought together into a central analysis, to ensure each topic isn't being looked at in isolation. We want to understand how the different data types build a picture of the project we're looking at, and then distribute the findings to the right teams.

We need to make sure this data reaches the marketing team, the sales team, the operations team - everyone who is going to be delivering the change we are looking to see.

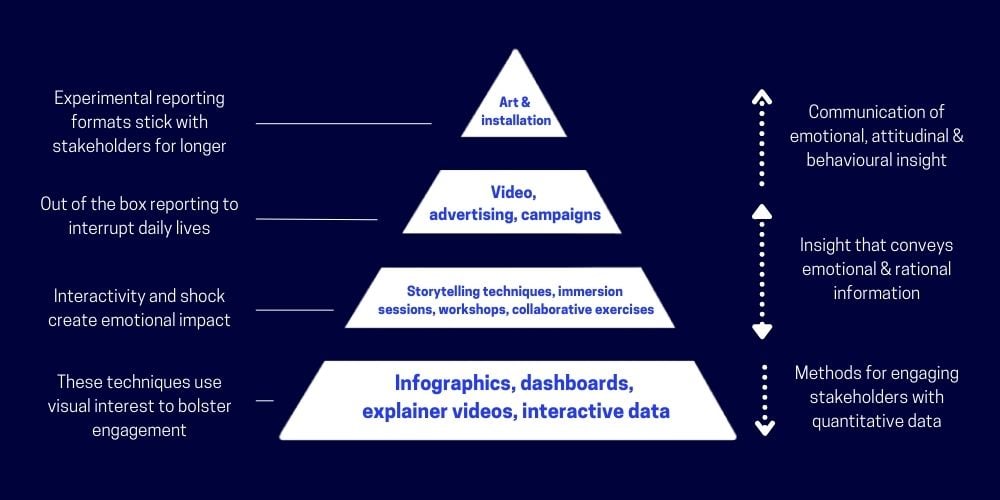

Consider the most appropriate reporting method

There are many increasingly creative and experimental ways we can get people to engage with us. Infographics, dashboards and explainer videos can give people access to data they can interact with and explore themselves. We can even bring in storytelling techniques and immersion sessions.

By considering the full range of reporting methods at our disposal, we're able to match the right method to the right person and the right type of engagement, and hopefully better connect with the people who are going to enact the change that we need.

Q&A on using course data to improve your offering

Q: What are your views on using NPS to tackle detractors and the issues that detractors raise?

CHRIS: "NPS is a really useful way to, I guess, intercept detractors before they have a tangible impact on your marketing. I think NPS is a really helpful mechanism. A lot of the time, people will leave online reviews or be more vocal about particularly positive or particularly negative experiences. NPS gives people an outlet to express their concerns and talk about the challenges they had in a more private forum first. Depending on the volume of detractors you have, I think it's worth trying to address each detractor individually."

"We're not necessarily looking to compensate people for having a bad experience, as we might with reviews, but it is important to take the time to make people feel that their concerns are being listened to and that you are going to consider their opinions going forward."

Q: How long should a typical training company spend going through course feedback?

CHRIS: "That's a really good question, and I think it really depends on the size of your offering and the variety you have to offer the market. In general, it's going to depend on the issues and problems that you want to solve."

"If you've noticed a decline in sales, that warrants a fairly substantive investigation, particularly if the factors driving it aren't obvious at the surface level. If things are going well, it's tempting to spend less time on research, and if there are fewer issues to address, I think that's fair as well."

"I think it's worth having something in your calendar monthly to review feedback. Feedback can be a bit of a black hole, where you can sink a lot of time if you want to, so it's about managing that effectively. Making sure you return to it periodically is probably more important than the amount of time you spend on it."

Q: If a training provider was experiencing overwhelmingly positive or negative feedback, what would be your typical calls to action from there?

CHRIS: "It's always great to have overwhelmingly positive feedback, but even if that is the case, I think it's still worth digging into the drivers behind it. What is it you're doing differently from others that is causing that? The same goes for overwhelmingly negative feedback. We're all human, and negative feedback can be difficult to handle, but considered from a more objective standpoint, there's useful data in there, and I think that's the important thing to remember on both sides of the coin."

"It's remembering there is useful data, and using those five questions at the start - what does my business need to know, what can this feedback tell me about that."

Q: Is it expensive to run lots of research?

CHRIS: "We do typically find there's an expectation that research is something associated with bigger companies, and that you need a whole research and insights department to get effective feedback. But, realistically, just talking to the people who have been through your training and making use of those passive data sources is another way of managing it."

"Make use of what you already have. Then, if you have a limited budget, there are a great many DIY and online software tools that can help with that now as well."

Access more content from Everest Virtual Training Industry Conference 2021.

Stuck on questions to ask your delegates? Check out our guide here: The 27 best training survey questions to start asking your delegates

For more amazing content like this, don't forget to subscribe to accessplanit's blog!
